Famous FPGA Accelerators Powering Modern AI & Computing

A quick overview of the world's most famous FPGA accelerators powering modern AI, cloud computing, and high-performance workloads, with key features, use cases, and comparison tables.

Field-Programmable Gate Arrays (FPGAs) have become a key hardware choice for accelerating AI, data analytics, and networking workloads because of their reconfigurable architecture and bit-level optimization capabilities. Developers can tailor the hardware logic itself to a given task, which makes FPGAs a good fit for cloud computing, telecom, HPC, and automotive applications. Unlike ASICs, whose logic is fixed at fabrication, and GPUs, which are programmable only in software on a fixed datapath, an FPGA's logic fabric can be reprogrammed after deployment. That is a distinct advantage when AI models and algorithms change quickly.
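To make the bit-level optimization point concrete, here is a minimal Python sketch of the kind of arithmetic an FPGA datapath can be sized for: a dot product computed in signed fixed-point at an arbitrary bit-width. It only models the numerics in software and is not FPGA code. The helper names (`to_fixed`, `fixed_dot`), the sample values, and the choice of two integer bits are illustrative assumptions, not taken from any vendor toolchain.

```python
def to_fixed(x: float, total_bits: int, frac_bits: int) -> int:
    """Quantize x to a signed fixed-point integer, saturating at the range limits."""
    scale = 1 << frac_bits
    lo = -(1 << (total_bits - 1))
    hi = (1 << (total_bits - 1)) - 1
    return max(lo, min(hi, round(x * scale)))


def fixed_dot(xs, ws, total_bits: int, frac_bits: int) -> float:
    """Dot product using integer multiply-accumulate, converted back to float at the end."""
    acc = 0  # the accumulator is kept wide, as a hardware MAC chain typically would
    for x, w in zip(xs, ws):
        acc += to_fixed(x, total_bits, frac_bits) * to_fixed(w, total_bits, frac_bits)
    # each product carries 2 * frac_bits fractional bits
    return acc / float(1 << (2 * frac_bits))


# Hypothetical sample data, chosen only for illustration.
inputs = [0.5, -0.25, 0.75, 0.125]
weights = [0.3, 0.6, -0.2, 0.9]
exact = sum(x * w for x, w in zip(inputs, weights))

for bits in (4, 6, 8, 12):
    # assumption: 2 integer bits (including sign), the rest fractional
    approx = fixed_dot(inputs, weights, total_bits=bits, frac_bits=bits - 2)
    print(f"{bits:2d}-bit: {approx:+.5f} (error {abs(approx - exact):.5f})")
```

On a CPU or GPU the word width is fixed by the hardware, whereas an FPGA design can instantiate multipliers at whatever width the accuracy target needs. Narrower operands generally cost fewer logic resources, at the price of the quantization error the loop prints.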

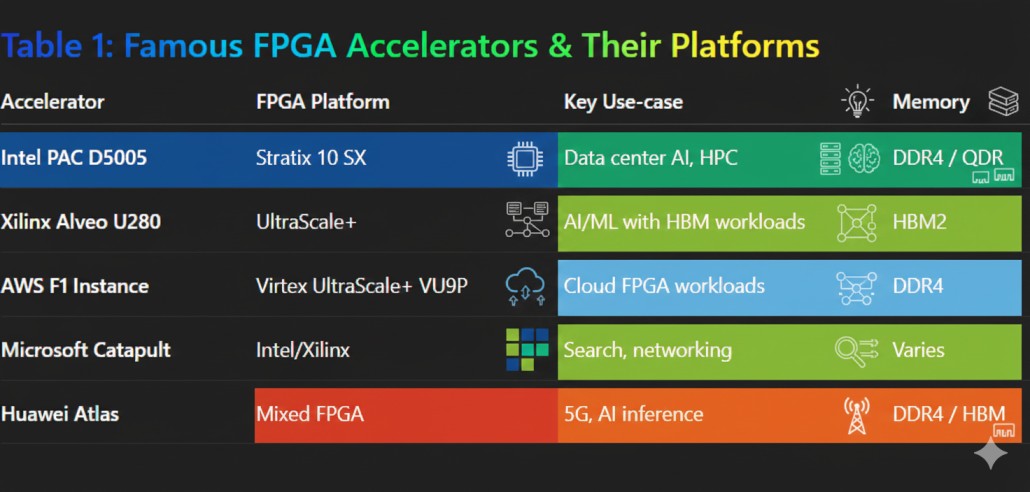

Leading Commercial Hardware: Enterprise and Data Center Accelerators

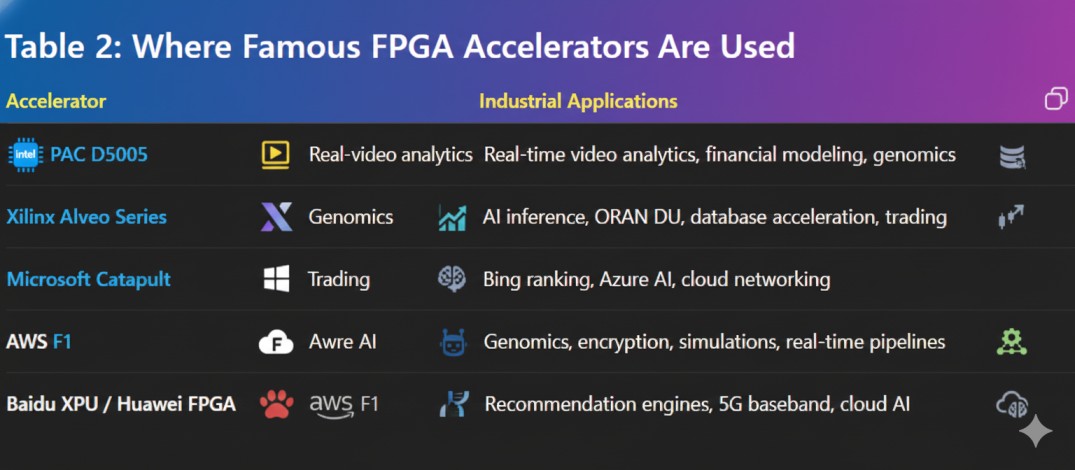

Among the most widely used FPGA accelerators is the Intel PAC D5005, built on the Stratix 10 SX FPGA with the HyperFlex architecture, an embedded ARM processor subsystem, and on-board DDR4 memory. It is used heavily in real-time analytics, financial modeling, and genomics. Similarly, AMD-Xilinx Alveo cards (U50, U200, U250, U280, U55C) are a mainstay of the data center market thanks to their UltraScale+ architecture, HBM2 memory on the U50, U280, and U55C (the U200 and U250 use DDR4), and Vitis AI integration, enabling low-latency AI inference, 5G acceleration, database search, and high-frequency trading.

Hyperscaler Adoption: FPGAs at Massive Scale in the Cloud

Cloud hyperscalers have also embraced FPGA acceleration at massive scale. Microsoft Project Catapult is one of the world’s largest FPGA deployments, powering Bing search ranking, Azure AI inference, and ultra-fast networking through SmartNIC architectures. AWS F1 instances, which use Xilinx VU9P FPGAs, let developers run custom logic in the cloud, enabling accelerated genomics, encryption, simulations, and real-time pipelines without buying or hosting physical FPGA boards. These platforms helped democratize FPGA access across research, AI startups, and enterprise customers.

Global Innovation and Emerging Use Cases

Emerging players also use FPGAs in innovative ways. Groq, known for its Tensor Streaming Processor, relied heavily on FPGAs for early AI hardware prototyping, compiler validation, and ultra-low-latency inference experiments. Meanwhile, in Asia, companies like Baidu and Huawei use FPGA-based accelerators for recommendation engines, 5G baseband processing, and cloud-based AI inference. Huawei’s Atlas FPGA cards and Baidu’s XPU variants enable high-bandwidth, customized AI operations optimized for region-specific workloads.

Conclusion: The Enduring Role of FPGAs in Future Computing Systems

As AI and big data workloads continue to grow, FPGAs remain an essential part of high-performance computing because of their adaptability, low latency, and ability to accelerate specialized algorithms. From Intel PAC and Alveo cards to AWS F1 and Microsoft's Project Catapult, FPGA accelerators support diverse applications across cloud, edge, networking, and analytics. This balance of flexibility and performance makes FPGAs well placed to keep powering next-generation computing systems.